Run AI anywhere. Maximize GPU efficiency.

Deploy and govern large-scale AI—without complexity. From a single GPU to thousands, intelligently manage performance, cost, and reliability in real time.

Reduce GPU costs

Scale AI faster

Stay in control

- GPU Efficiency: Up to 2x

- Time to Prod: Minutes

- Scale Supported: 1000s of GPUs

- Infrastructure Cost: Reduced

Turn AI Infrastructure into a Competitive Advantage

Most organizations struggle to move AI from experimentation to production—held back by cost, complexity, and lack of control.

This platform changes that.

Run anywhere

Deploy seamlessly on-prem, in the cloud, or in hybrid environments.

Maximize utilization

Automatically route each request to the GPUs best placed to serve it, maximizing utilization and performance.
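To make utilization-aware routing concrete, here is a minimal sketch of one simple strategy: send each request to the replica with the lowest load fraction. It is an illustration only, not the platform's actual routing logic; the `Replica` class, the `capacity` field, and the `route` function are hypothetical names introduced for this example.

```python
from dataclasses import dataclass


@dataclass
class Replica:
    """Hypothetical model replica pinned to one GPU."""
    name: str
    capacity: int        # assumed max concurrent requests for this replica
    in_flight: int = 0   # requests currently being served

    @property
    def load(self) -> float:
        return self.in_flight / self.capacity


def route(replicas: list[Replica]) -> Replica:
    """Pick the replica with the lowest load fraction that still has headroom."""
    candidates = [r for r in replicas if r.in_flight < r.capacity]
    if not candidates:
        raise RuntimeError("all replicas are at capacity")
    chosen = min(candidates, key=lambda r: r.load)
    chosen.in_flight += 1
    return chosen


# Two replicas of different sizes; six requests arrive back to back.
replicas = [Replica("gpu-a", capacity=4), Replica("gpu-b", capacity=8)]
assignments = [route(replicas).name for _ in range(6)]
```

Because the choice is based on load fraction rather than raw request count, the larger replica absorbs proportionally more traffic, so both end up equally utilized.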

Enterprise-grade security

Deliver secure, multi-tenant AI with granular governance and access control.

Eliminate complexity

A self-optimizing, self-healing system that abstracts away infrastructure management.

A New Foundation for Enterprise AI

Intelligent Inference Infrastructure

Automatically deploy and optimize models for maximum performance. Built-in KV cache and architecture-aware optimization across GPUs.

- Intelligent inference planner

- Support for disaggregated inference

Know exactly what to deploy

Large library of pre-analyzed models with performance and resource insights.

Manage at any scale

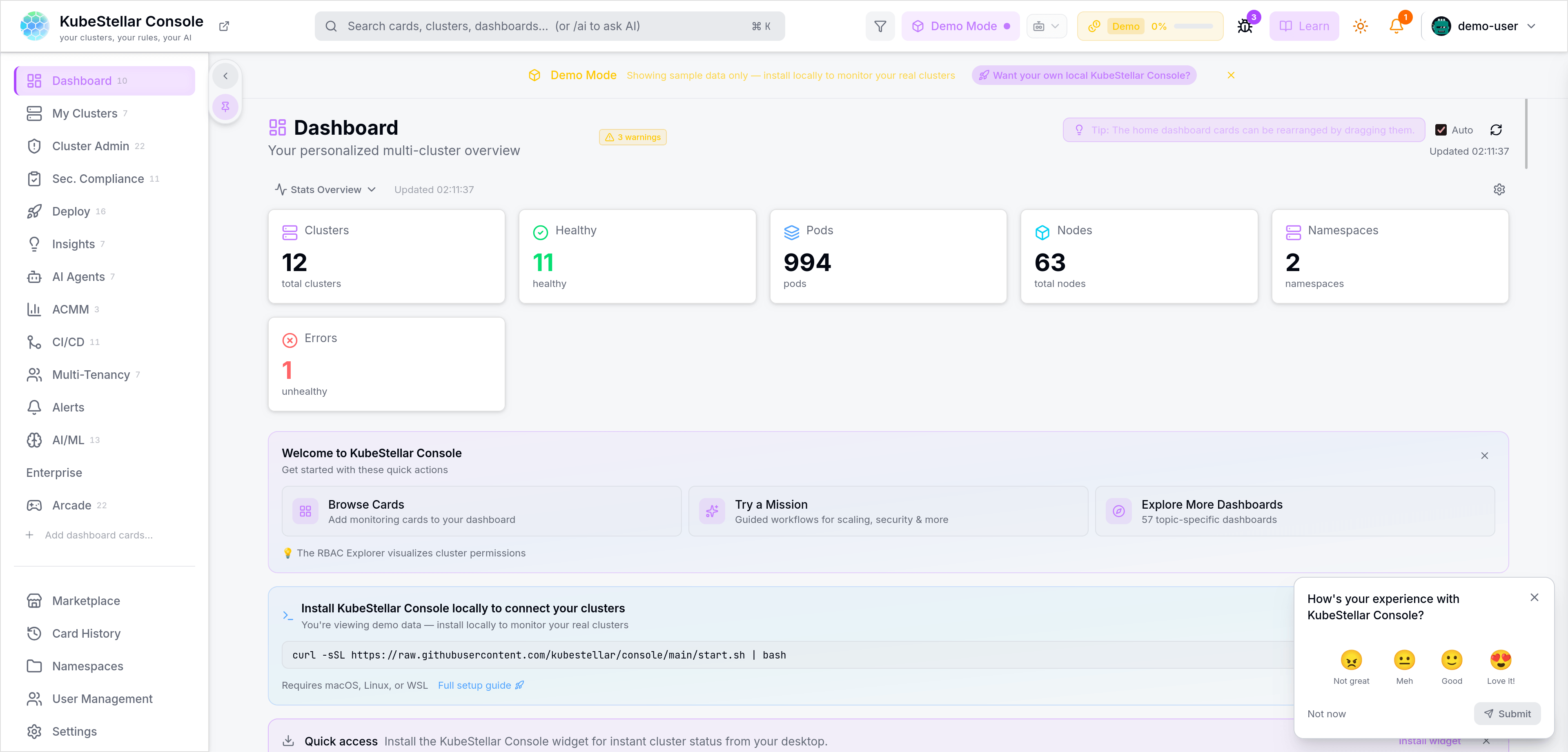

Deploy, scale, and monitor across thousands of GPUs from a single interface. Full visibility into VRAM, utilization, and capacity.

→ Syncing replica set: ai-gateway-prod

→ Desired: 12 | Current: 12 | Ready: 12

→ Scaling up nodes in cluster-alpha...

✓ Auto-scaling event complete

Built for Every Stakeholder

Enterprise AI requires alignment across the organization. We provide the tools each team needs to succeed.

Reduce Cost & Financial Risk

Increase GPU efficiency to do more with less hardware. Eliminate over-provisioning and enforce budgets with quotas.
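As a rough illustration of quota-based budget enforcement, the sketch below admits a job only if its GPU-hours fit within a team's remaining allowance. The `TeamQuota` class, the `admit` function, and the GPU-hour accounting unit are assumptions made for this example, not a description of the platform's API.

```python
from dataclasses import dataclass


@dataclass
class TeamQuota:
    """Hypothetical per-team budget, measured in GPU-hours."""
    gpu_hours_limit: float
    gpu_hours_used: float = 0.0

    def remaining(self) -> float:
        return self.gpu_hours_limit - self.gpu_hours_used


def admit(quota: TeamQuota, gpus: int, hours: float) -> bool:
    """Admit the job only if its GPU-hours fit in the remaining quota."""
    cost = gpus * hours
    if cost > quota.remaining():
        return False  # reject: the job would exceed the team's budget
    quota.gpu_hours_used += cost
    return True


quota = TeamQuota(gpu_hours_limit=100.0)
first = admit(quota, gpus=8, hours=10.0)   # requests 80 GPU-hours
second = admit(quota, gpus=4, hours=10.0)  # requests 40, but only 20 remain
```

Rejecting at admission time, rather than after the spend has happened, is what keeps a quota a hard budget instead of a report.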

Scale AI Across the Organization

Standardize AI deployment and governance securely. Avoid vendor lock-in with hybrid model access and future-proof infrastructure.

Operate with Control & Confidence

Deploy and scale from a single GPU to thousands with ease, backed by predictive auto-scaling, intelligent scheduling, and multi-tenant access control.

Build and Ship Faster

Prototype, iterate, and deploy in one unified platform. Switch between models instantly and build advanced agents with RAG workflows.

Everything You Need. One Platform.

From infrastructure to developer workflows, every layer of AI inference is unified into a single system.

- Intelligent inference and optimization engine

- Centralized control plane and observability

- Developer platform for building AI applications

- Unified gateway for internal and external models

metrics-server: aggregating 5m windows... [OK]

gateway-lb: routing 4,200 req/s to cluster-alpha [OK]

auto-scaler: provisioning 2x A10G for queue buildup [PENDING]

auto-scaler: nodes ready, adding to pool [OK]

Ready to Scale AI Without the Complexity?

Reduce cost. Increase performance. Take control of your AI infrastructure.